Members and Collaborators

- Jelena Mirkovic

- Simon Woo

Overview

This project explores a new hybrid cloud computing framework that lets users select machines and services from multiple public cloud providers, reducing overall operating cost while still meeting job completion time requirements. Cloud computing provides low-cost, scalable, on-demand computing resources and services for a variety of applications. Most cloud providers offer Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS). In the current paradigm, cloud users can generally choose computing resources only within a single provider. However, providers offer different computing resources at different prices, and the performance of resources offered at approximately the same cost varies drastically across providers.

In this work, we propose a Hybrid Cloud computing paradigm (HyCloud), in which cloud users can select machines from different cloud service providers to minimize overall task completion time, subject to task completion time requirements and cost constraints. We formulate an optimization problem that allocates the best machines from different cloud providers and solve it with heuristic-based Simulated Annealing (SA), using real machine benchmarks and a real use case scenario. In particular, we attempt to characterize when HyCloud is beneficial once the extra network and multi-cloud processing latency is taken into account. The simulation results show that, within a nominal latency budget, the HyCloud approach provides better performance at the same cost across different scenarios.
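To make the allocation idea concrete, the following is a minimal sketch of simulated annealing over machine choices from several providers. It is not the project's actual formulation: the catalog values, the `INTER_CLOUD_LATENCY` term, the penalty weight, and the cooling schedule are all hypothetical and chosen only for illustration.

```python
import math
import random

# Hypothetical catalog: role -> list of (provider, monthly cost in USD, task time in s).
# These numbers are invented for illustration; they are not benchmark results.
CATALOG = {
    "compute": [("A", 70.0, 12.0), ("B", 50.0, 16.0), ("C", 85.0, 8.0)],
    "db":      [("A", 40.0, 6.0),  ("B", 35.0, 5.0),  ("C", 55.0, 4.0)],
    "storage": [("A", 20.0, 4.0),  ("B", 30.0, 2.5),  ("C", 10.0, 4.5)],
}
ROLES = list(CATALOG)
INTER_CLOUD_LATENCY = 0.5  # assumed extra seconds per additional provider used


def cost(assign):
    """Total monthly cost of an assignment (role -> index into CATALOG[role])."""
    return sum(CATALOG[r][assign[r]][1] for r in ROLES)


def completion_time(assign):
    """Sum of task times plus an assumed latency charge for each extra provider."""
    base = sum(CATALOG[r][assign[r]][2] for r in ROLES)
    providers = {CATALOG[r][assign[r]][0] for r in ROLES}
    return base + INTER_CLOUD_LATENCY * (len(providers) - 1)


def energy(assign, budget, penalty=10.0):
    """Completion time, plus a penalty for every dollar over the cost budget."""
    over_budget = max(0.0, cost(assign) - budget)
    return completion_time(assign) + penalty * over_budget


def anneal(budget, steps=5000, t0=10.0, cooling=0.999, seed=0):
    """Search for a low-energy assignment, starting from single-provider A."""
    rng = random.Random(seed)
    current = {r: 0 for r in ROLES}
    best = dict(current)
    temp = t0
    for _ in range(steps):
        # Propose a neighbor: re-pick the machine for one randomly chosen role.
        cand = dict(current)
        role = rng.choice(ROLES)
        cand[role] = rng.randrange(len(CATALOG[role]))
        delta = energy(cand, budget) - energy(current, budget)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if delta <= 0 or rng.random() < math.exp(-delta / temp):
            current = cand
            if energy(current, budget) < energy(best, budget):
                best = dict(current)
        temp *= cooling  # geometric cooling
    return best


if __name__ == "__main__":
    budget = 130.0  # cost of the all-A baseline in this toy catalog
    best = anneal(budget)
    for r in ROLES:
        print(r, CATALOG[r][best[r]])
    print("cost:", cost(best), "time:", completion_time(best))
```

Setting the budget to the cost of the single-provider baseline mirrors the "better performance at the same cost" comparison: the search may mix providers only if the result stays within what the single-cloud deployment would have cost.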

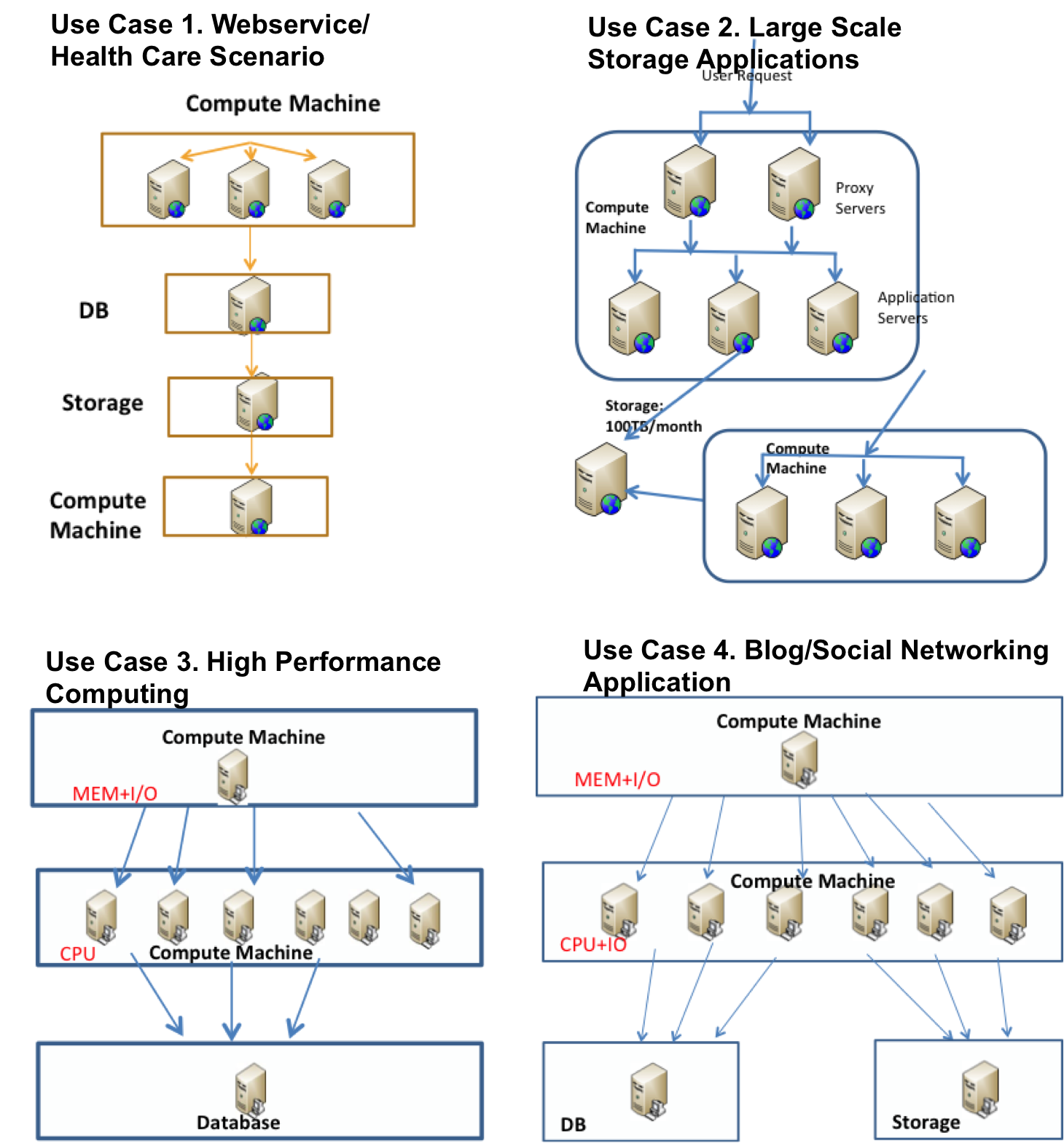

Scenarios

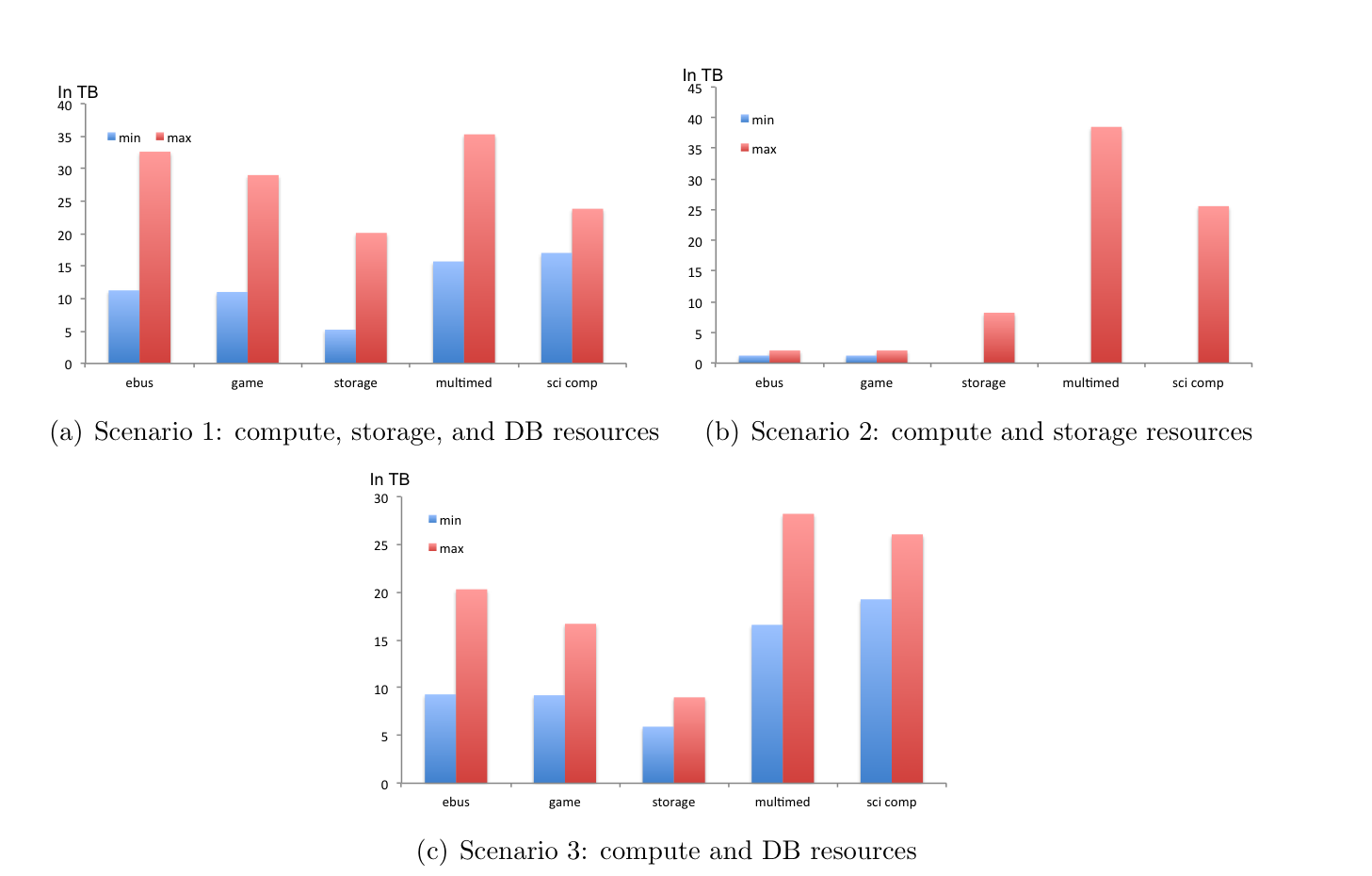

We consider four different scenarios, which depend on how the user applications are distributed and deployed over separate compute, DB, and storage services. The first scenario assumes cloud applications use compute, cloud DB, and cloud storage services. Typically, this scenario is desirable when application providers want to clearly separate compute, DB, and storage tasks, e.g., for reliability. For example, managing and storing user session data in a DB and storage separate from the Web server ensures that rebooting the Web server does not disrupt current user sessions. The second scenario assumes cloud applications are deployed over compute and cloud storage services only, and DB tasks run on compute machines. This scenario applies when application providers want to manage compute and DB functions together for faster access, while storing data in a dedicated cloud storage service for fault tolerance.

The third scenario assumes cloud applications use compute and cloud DB services only, and storage tasks are managed on compute machines. This scenario applies when application providers want fast access to stored data and can implement good fault-tolerance solutions themselves, but want to use cloud DB services for more complex relational DB operations or for dedicated, faster (key, value) pair lookup. The fourth scenario assumes cloud applications use only compute machines for computation, DB, and storage tasks, giving fast access both to database information and to stored data. This scenario applies to applications with a small user base and simple operations.
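The four scenarios differ only in whether DB and storage run as dedicated cloud services or on the compute machines. One possible way to encode that, using hypothetical names (`Scenario`, `services_to_allocate`) that do not come from the project itself, is:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    name: str
    dedicated_db: bool       # True: cloud DB service; False: DB runs on compute machines
    dedicated_storage: bool  # True: cloud storage service; False: storage on compute machines


SCENARIOS = [
    Scenario("1: compute + DB + storage", dedicated_db=True, dedicated_storage=True),
    Scenario("2: compute + storage", dedicated_db=False, dedicated_storage=True),
    Scenario("3: compute + DB", dedicated_db=True, dedicated_storage=False),
    Scenario("4: compute only", dedicated_db=False, dedicated_storage=False),
]


def services_to_allocate(scenario):
    """Return the service types that need a separate machine or service choice."""
    services = ["compute"]
    if scenario.dedicated_db:
        services.append("db")
    if scenario.dedicated_storage:
        services.append("storage")
    return services


if __name__ == "__main__":
    for s in SCENARIOS:
        print(s.name, "->", services_to_allocate(s))
```

Under this encoding, the first scenario leaves three independent choices that could each go to a different provider, while the fourth leaves only the compute choice.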

Evaluation

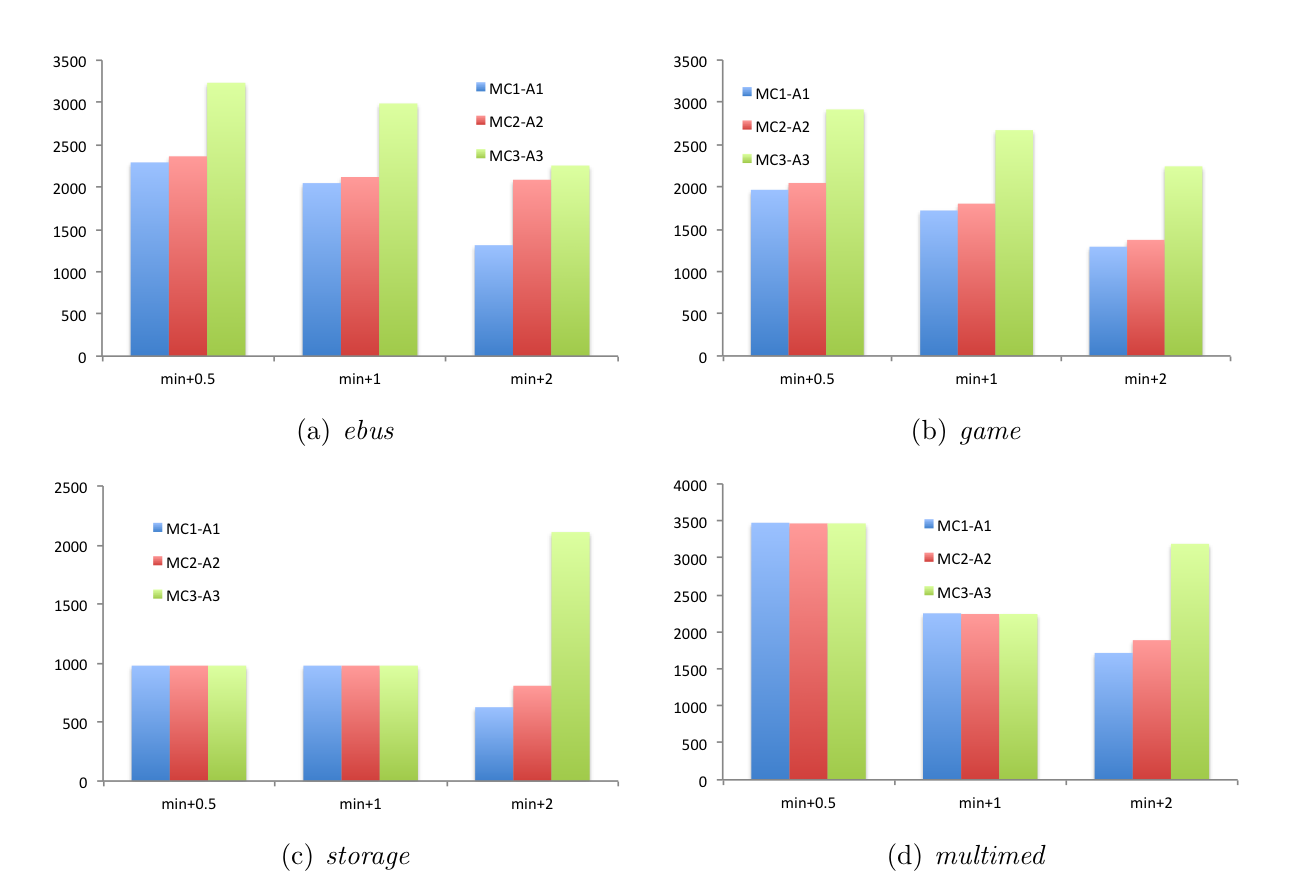

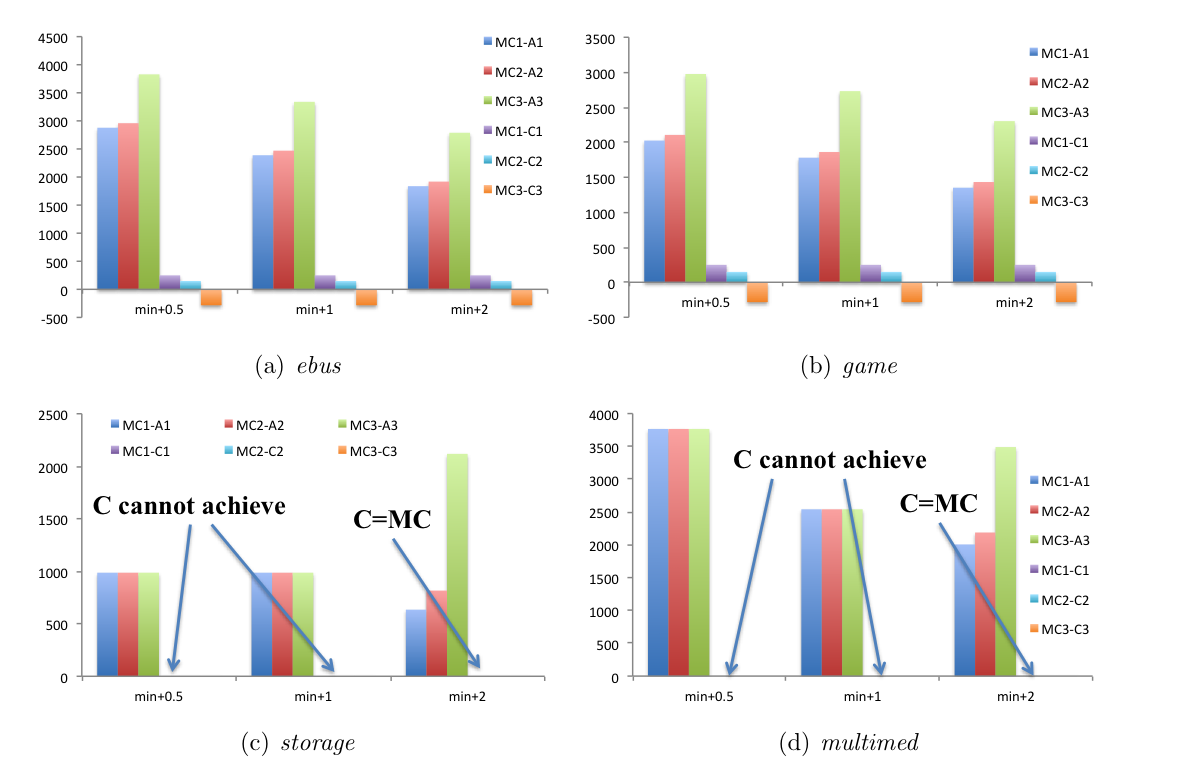

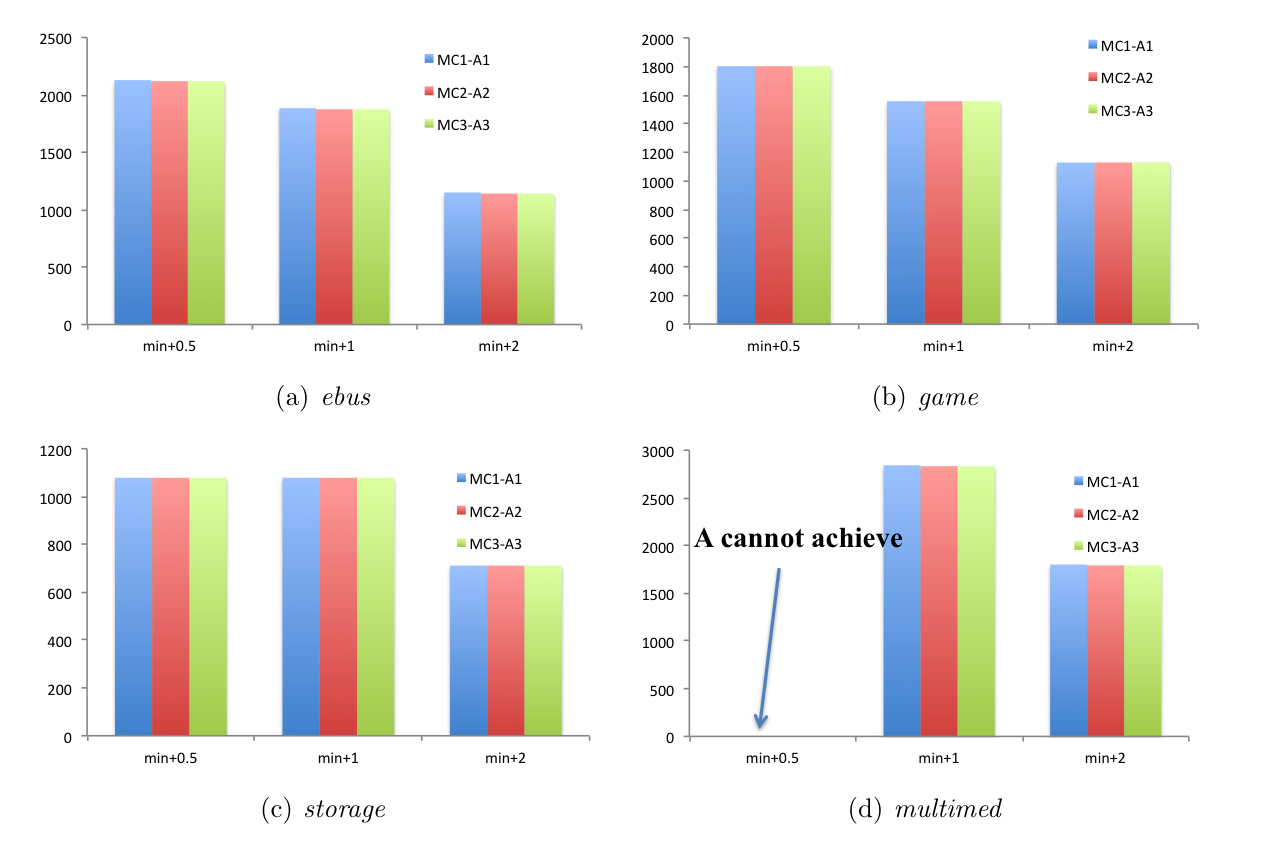

For each scenario, we evaluate e-business, game, storage, multimedia, and scientific computing workloads.

Fig. 2. Monthly cost savings with (Compute, Storage) machine types

Fig. 3. Monthly cost savings with (Compute, DB) machine types

Fig. 4. Allowable Maximum Inter-Cloud Data Transfer Amount

Conclusion

With increased differentiation in cloud providers' service offerings, allocating application components across multiple clouds becomes an attractive option. Our evaluation shows that such allocation provides significant cost savings over single-cloud allocations in a variety of realistic cloud use scenarios. While multi-cloud allocation carries additional delay and cost due to inter-cloud communication, its performance gains and cost savings are still significant. In particular, we have observed that the cost benefit of multi-cloud allocation is substantial when applications are deployed over separate, dedicated compute, DB, and storage services.